The Deletion Delusion: Your Modern Data Platform Is Probably Failing Compliance

Modern Data Architectures vs. GDPR

There is a fundamental friction at the heart of modern data management: the widening chasm between legal fantasy and engineering reality. Your legal or compliance team may assume that a “Right to Erasure” request is a single SQL statement, but every engineering lead knows the truth. Modern data platforms, built on “Big Data” and “Lakehouse” architectures, are optimized for append-heavy, read-intensive workloads. They were never designed for the selective, row-level mutations that GDPR erasure requires.

Nearly every company claims compliance, yet the underlying architecture of modern data lakes often makes true erasure technically impossible, or at least prohibitively expensive. Most organizations operate under a “deletion delusion”: data is merely hidden from the application layer while it physically persists in the depths of S3 or other object storage.

The Financial Language of Privacy: ALE

The biggest hurdle for privacy and platform engineering is securing budget for a “Compliance Program.” To get management’s attention, you must translate regulatory risk (which most management teams treat as an urban legend until they are fined for non-compliance) into Annual Loss Expectancy (ALE). ALE isn’t just a compliance metric; it is the basis of your ROSI (Return on Security Investment).

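The formula is: ALE = Annualized Rate of Occurrence (ARO) × Single Loss Expectancy (SLE), where ARO is the expected number of failures per year (for a rare event, roughly its annual probability) and SLE is the impact of a single failure.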

When calculating the Single Loss Expectancy (SLE), don’t just look at the fine. You must include engineering remediation costs, legal fees, and the “compute surge” required to fix the data post-incident.

The Financial Reality: If there is a 2% annual chance of a material GDPR failure and the SLE (fine + remediation + legal) is €5,000,000, your ALE is:

2% × €5M = €100,000 / year

If building a reliable automated shredder costs a one-time €80,000 and it eliminates at least 80% of that expected loss, the program pays for itself within the first year. Without this quantitative model, you’re just an engineer asking for more “unproductive” budget.
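The arithmetic above is easy to keep in a small script so the numbers can be re-run whenever the estimates change. A minimal sketch in Python, assuming the commonly used ROSI formulation ROSI = (ALE × mitigation ratio − cost) / cost; the function names and the mitigation ratio (the fraction of the ALE the shredder is expected to remove) are illustrative assumptions, not part of the original model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskScenario:
    """One privacy failure scenario, valued in euros."""
    aro: float      # Annualized Rate of Occurrence: expected failures per year
    sle_eur: float  # Single Loss Expectancy: fine + remediation + legal + compute surge

    @property
    def ale_eur(self) -> float:
        # ALE = ARO x SLE
        return self.aro * self.sle_eur


def sle_from_components(*costs_eur: float) -> float:
    """Sum the cost components of a single incident into an SLE."""
    return float(sum(costs_eur))


def rosi(ale_eur: float, mitigation_ratio: float, cost_eur: float) -> float:
    """Return on Security Investment, using the assumed formula
    (ALE * mitigation_ratio - cost) / cost."""
    if cost_eur <= 0:
        raise ValueError("cost_eur must be positive")
    if not 0.0 <= mitigation_ratio <= 1.0:
        raise ValueError("mitigation_ratio must be between 0 and 1")
    return (ale_eur * mitigation_ratio - cost_eur) / cost_eur


def payback_years(ale_eur: float, mitigation_ratio: float, cost_eur: float) -> float:
    """Years until the avoided annual loss covers a one-time cost."""
    avoided_per_year = ale_eur * mitigation_ratio
    return float("inf") if avoided_per_year <= 0 else cost_eur / avoided_per_year


if __name__ == "__main__":
    scenario = RiskScenario(aro=0.02, sle_eur=5_000_000)
    print(f"ALE: EUR {scenario.ale_eur:,.0f} / year")
    print(f"ROSI (full mitigation): {rosi(scenario.ale_eur, 1.0, 80_000):.0%}")
    print(f"Payback: {payback_years(scenario.ale_eur, 1.0, 80_000):.1f} years")
```

With the article’s numbers, and assuming the shredder removes the entire risk, this reports an ALE of €100,000 per year, a ROSI of 25%, and a payback period of 0.8 years. Lowering the mitigation ratio is a quick way to show management how sensitive the business case is to that assumption.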

GDPR Assumes You Know What PII Is (You Don’t)

The first point of failure isn’t a lack of intent; it’s a failure of Data Protection by Design (GDPR Article 25). GDPR assumes you have a clear, static map of personal data, which engineers usually call Personally Identifiable Information (PII). In a modern data platform, that map is a hallucination.

Data classification is almost always incomplete, and in the tangle of modern pipelines, PII is transformed and re-created across what is often ten or more systems. Even if you scrub a user_id, the user’s ghost persists in derived signals and indirect identifiers: session IDs, device fingerprints, and behavioral embeddings. AI systems can now re-identify individuals from data you never labeled as sensitive. If you can’t locate every derived identifier, you cannot meet GDPR’s accountability principle (Article 5(2)), which requires you to demonstrate compliance.
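One way to make the “where does this identifier live?” question answerable is to keep a lineage graph and walk it from every source that holds personal data. A minimal sketch, assuming lineage is already captured as a mapping from each dataset to the datasets derived from it; the dataset names below are hypothetical, and a real catalog would also need column-level lineage to catch identifiers that survive a transformation.

```python
from collections import deque

# Hypothetical lineage: each dataset maps to the datasets derived from it.
LINEAGE: dict[str, list[str]] = {
    "crm.users": ["lake.users_raw"],
    "lake.users_raw": ["lake.sessions", "ml.user_embeddings"],
    "lake.sessions": ["bi.daily_active_users", "lake.device_fingerprints"],
    "ml.user_embeddings": ["ml.recsys_index"],
    "bi.daily_active_users": [],
}


def downstream_of(source: str, lineage: dict[str, list[str]]) -> set[str]:
    """Breadth-first walk returning every dataset derived, directly or
    transitively, from `source`. Cycles are handled by the `seen` set."""
    seen: set[str] = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


if __name__ == "__main__":
    for dataset in sorted(downstream_of("crm.users", LINEAGE)):
        print("erasure must also reach:", dataset)
```

Every dataset in the result is a place an erasure request has to reach, or be proven irrelevant to; anything missing from the graph is, by definition, outside your erasure process.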

Most companies are not non-compliant because they ignore GDPR. They are non-compliant because their architecture makes compliance (almost) impossible.

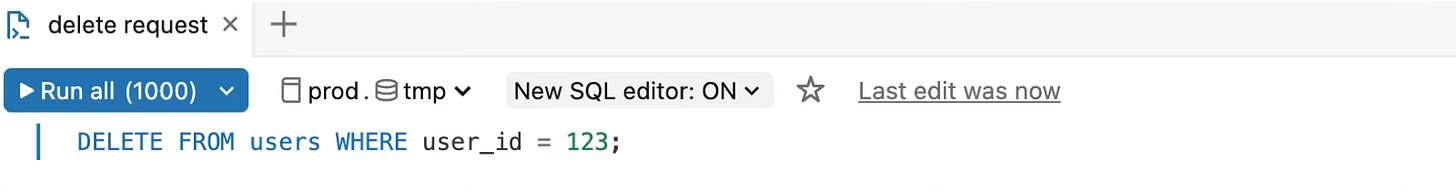

"DELETE" is a Lie in the World of Immutable Files

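In traditional relational database systems, deletion is a predictable row-level transaction. In the OLAP/Lakehouse world, your SQL DELETE is a lie. Because these systems rely on immutable columnar formats like Parquet, a delete command doesn’t remove bytes. Depending on the table format and its settings, it either rewrites every affected file without the deleted rows (copy-on-write) or records the deleted rows in separate delete files (merge-on-read). Either way, the original files stay in storage until a separate cleanup job removes them, and the rewrites trigger a cascading loss of storage efficiency.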
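To see why the bytes survive, it helps to model the copy-on-write path in a few lines. This is a toy, in-memory sketch, not Delta Lake or Iceberg code: the `ToyTable` class, its file naming, and its snapshot list are hypothetical stand-ins for immutable Parquet files and a transaction log.

```python
from dataclasses import dataclass, field
from typing import Callable

Row = dict
Predicate = Callable[[Row], bool]


@dataclass
class ToyTable:
    """Toy copy-on-write table over immutable 'files' (hypothetical, not a real format)."""
    storage: dict[str, tuple[Row, ...]] = field(default_factory=dict)  # file -> rows, never mutated
    live_files: list[str] = field(default_factory=list)                # current snapshot
    history: list[list[str]] = field(default_factory=list)             # older snapshots (time travel)
    next_id: int = 0

    def _write_file(self, rows: list[Row]) -> str:
        name = f"part-{self.next_id:05d}.parquet"
        self.next_id += 1
        self.storage[name] = tuple(rows)
        return name

    def append(self, rows: list[Row]) -> None:
        self.history.append(list(self.live_files))
        self.live_files.append(self._write_file(rows))

    def delete_where(self, predicate: Predicate) -> None:
        """Copy-on-write DELETE: rewrite each affected file; old files stay in storage."""
        self.history.append(list(self.live_files))
        new_live: list[str] = []
        for name in self.live_files:
            rows = self.storage[name]
            kept = [r for r in rows if not predicate(r)]
            if len(kept) == len(rows):
                new_live.append(name)                    # untouched file is reused as-is
            elif kept:
                new_live.append(self._write_file(kept))  # whole file rewritten minus matches
            # a file with no surviving rows simply drops out of the snapshot
        self.live_files = new_live

    def visible_rows(self) -> list[Row]:
        return [r for name in self.live_files for r in self.storage[name]]

    def physically_present(self, predicate: Predicate) -> bool:
        return any(predicate(r) for rows in self.storage.values() for r in rows)


if __name__ == "__main__":
    t = ToyTable()
    t.append([{"user_id": 1}, {"user_id": 2}])
    t.append([{"user_id": 3}])
    t.delete_where(lambda r: r["user_id"] == 2)
    print("visible:", t.visible_rows())
    print("still on storage:", t.physically_present(lambda r: r["user_id"] == 2))
```

The query layer reports user 2 as gone, yet `part-00000.parquet` still holds the row and the previous snapshot in `history` still points at it. Only a separate cleanup step that drops expired snapshots and deletes unreferenced files (`VACUUM` in Delta Lake, snapshot expiration in Iceberg) removes the bytes.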

Behind a “Logical Table: Deleted” checkmark, a standard delete command actually causes:

Massive I/O and Compute Spikes: The system must scan, filter, rewrite, and replace every file that contains even one matching row, so deleting a single row can mean rewriting entire file groups.

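S3 Tier Promotion: Deletion jobs must read files that have aged into cheaper storage. Under S3 Intelligent-Tiering, that access moves objects back to the frequent-access tier, and objects in Glacier Flexible Retrieval or Deep Archive must be restored before they can be read at all. The monthly cloud bill spikes, yet the original objects remain in storage, unpurged.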

Passive Propagation: Deleted data persists in:

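❌ Delta/Iceberg History: Transaction logs keep older snapshots, and the data files they reference, available for “time travel” until a VACUUM or snapshot-expiration job removes them.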

❌ S3/Cloud Backups: Data lives on in secondary storage and snapshots.

❌ Stale Aggregates: Downstream summaries still contain the mathematical influence of the deleted records.
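A useful exercise is to list every place from the checklist above where a deleted row can survive, together with the retention window your configuration gives it, and compute when the last copy actually disappears. A minimal sketch; the retention values are hypothetical placeholders rather than defaults of any product, and the 30-day SLA is an assumed internal target, so substitute your own settings.

```python
from datetime import date, timedelta

# Hypothetical retention windows for each place a "deleted" row can survive.
# Replace these with the values from your own table, bucket and backup configs.
RESIDUAL_COPIES: dict[str, timedelta] = {
    "table history (time travel)": timedelta(days=7),
    "object-store versioning": timedelta(days=30),
    "nightly backups": timedelta(days=90),
    "stale aggregates (rebuild cycle)": timedelta(days=1),
}


def physical_erasure_date(delete_date: date, copies: dict[str, timedelta]) -> date:
    """Date by which every residual copy has aged out, assuming every
    cleanup job runs on schedule: the delete date plus the longest retention."""
    return delete_date + max(copies.values(), default=timedelta(0))


def copies_exceeding(copies: dict[str, timedelta], sla: timedelta) -> list[str]:
    """Residual copies whose retention outlives the erasure SLA."""
    return sorted(name for name, ttl in copies.items() if ttl > sla)


if __name__ == "__main__":
    requested = date(2026, 1, 1)
    print("bytes fully gone by:", physical_erasure_date(requested, RESIDUAL_COPIES))
    print("over a 30-day SLA:", copies_exceeding(RESIDUAL_COPIES, timedelta(days=30)))
```

The point of the exercise is the second list: each entry is a copy that the “Logical Table: Deleted” checkmark says nothing about, and each one needs its own purge path or a documented justification.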

The AI Black Hole: Logs, Embeddings, and the Unknown

The most dangerous tier of the deletion hierarchy is the “AI/Unknown” tier. Organizations are currently feeding massive amounts of PII into Large Language Models (LLMs), creating a trail of logs and high-dimensional embeddings that are, in practice, nearly impossible to “un-learn” or selectively purge.
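Logs and embeddings are only purgeable if every record can be traced back to the person it came from. A minimal sketch of that idea, assuming a hypothetical in-memory vector store in which each embedding is tagged with a data-subject ID at write time; it does nothing for model weights that were already trained on the data, which is the part that genuinely resists un-learning.

```python
from dataclasses import dataclass, field


@dataclass
class SubjectKeyedVectorStore:
    """Toy vector store that indexes every embedding by data subject (hypothetical)."""
    vectors: dict[str, list[float]] = field(default_factory=dict)   # vector_id -> embedding
    by_subject: dict[str, set[str]] = field(default_factory=dict)   # subject_id -> vector_ids

    def add(self, vector_id: str, subject_id: str, embedding: list[float]) -> None:
        self.vectors[vector_id] = list(embedding)
        self.by_subject.setdefault(subject_id, set()).add(vector_id)

    def erase_subject(self, subject_id: str) -> int:
        """Delete every embedding derived from subject_id; return how many were removed."""
        vector_ids = self.by_subject.pop(subject_id, set())
        for vector_id in vector_ids:
            self.vectors.pop(vector_id, None)
        return len(vector_ids)

    def holds_subject(self, subject_id: str) -> bool:
        return subject_id in self.by_subject


if __name__ == "__main__":
    store = SubjectKeyedVectorStore()
    store.add("v1", "user-42", [0.1, 0.7])
    store.add("v2", "user-42", [0.3, 0.2])
    store.add("v3", "user-7", [0.9, 0.4])
    print("removed:", store.erase_subject("user-42"))
    print("remaining:", sorted(store.vectors))
```

The design choice that matters is the tag at write time: without the `subject_id` index, erasing one person means scanning or rebuilding the entire index.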

Passive cleanup is no longer enough. We need an AI Control Plane that moves privacy to active runtime enforcement. This control plane must at least:

Understand data sensitivity at the point of ingestion.

Enforce policies at the prompt/inference level.

Provide verifiable evidence of erasure across the entire AI lifecycle.
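The three duties above can be sketched as plain functions. This is a minimal illustration, assuming two regex detectors (e-mail addresses and phone numbers) stand in for real sensitivity classification, which needs far more than two patterns; the function names and the receipt schema are hypothetical, not a standard.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Hypothetical detectors; real classification needs far more than two patterns.
DETECTORS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def classify(text: str) -> list[str]:
    """Ingestion-time tagging: which sensitive categories does this text contain?"""
    return sorted(label for label, pattern in DETECTORS.items() if pattern.search(text))


def enforce_prompt_policy(prompt: str) -> str:
    """Inference-time enforcement: mask detected identifiers before the prompt reaches a model."""
    for label, pattern in DETECTORS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def erasure_receipt(subject_id: str, systems_purged: list[str]) -> dict:
    """Evidence of erasure: which systems confirmed deletion, and when.
    The subject ID is stored only as a SHA-256 hash (pseudonymized, not anonymous)."""
    body = {
        "subject_sha256": hashlib.sha256(subject_id.encode("utf-8")).hexdigest(),
        "systems": sorted(systems_purged),
        "completed_at": datetime.now(timezone.utc).isoformat(),
    }
    return {**body, "digest": _digest(body)}


def verify_receipt(receipt: dict) -> bool:
    """Recompute the digest over the body. This catches accidental or naive edits;
    store the digest separately or sign it if you need real tamper evidence."""
    body = {k: v for k, v in receipt.items() if k != "digest"}
    return _digest(body) == receipt.get("digest")


if __name__ == "__main__":
    prompt = "Summarize the ticket from jane.doe@example.com, phone +49 170 1234567"
    print(classify(prompt))
    print(enforce_prompt_policy(prompt))
    receipt = erasure_receipt("user-42", ["crm", "lake", "vector-store"])
    print(verify_receipt(receipt))
```

Regex masking is the weakest link here; it will miss indirect identifiers such as session IDs or embeddings entirely, which is exactly why the control plane has to start from ingestion-time classification rather than rely on prompt filtering alone.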

Conclusion: Beyond the Checkbox

True compliance requires a shift from “passive compliance” to “active privacy engineering.” A defensible strategy is a phased rollout that follows a priority hierarchy, for example:

Mandatory Right to Erasure (DDR) and Account Deletion.

Content deletion and retroactive backfills.

Addressing indirect identifiers (such as session IDs), full downstream propagation, and auditability.

Final Thought: Is your current “DELETE” button actually removing data, or just hiding it from view? The European Data Protection Board’s 2026 report makes it clear: regulators no longer accept “technical difficulty” or “immutable architecture” as valid excuses for data persistence. They are specifically targeting gaps in backup erasure and failed anonymization. If your data platform treats deletion as an afterthought, you aren’t compliant; you’re just lucky. For now.